Man, has it been a busy week in cyber. We had a 6-hour Facebook outage caused by an insecure protocol, a massive Twitch TV breach, insider threats, and more.

Facebook suffers 6-hour outage; the culprit? BGP

On Monday, October 4th 2021, at around 1511 UTC, Facebook users started to suffer a wave of errors when attempting to connect to any service sharing the Facebook CDN (e.g. Facebook, Instagram, WhatsApp). There was a lot of confusion as the historically stalwart service gave users vague messages about their internet being down. I had several people in my neighborhood ask me if there was an internet outage (thanks, Facebook).

The rumor mill started churning, as it does, with people blaming anything from DNS to hackers. A few people even claimed the Facebook outage was actually orchestrated by the wealthy elites as a false flag operation to distract from the Pandora Papers.

People were still trying to figure out what was happening when Cloudflare released a detailed blog post outlining what they were seeing from their side as a different CDN. Facebook also released a statement saying that a router configuration issue caused the outage. The misconfiguration? BGP.

What is BGP?

Border Gateway Protocol, or BGP for short, is the method by which Autonomous Systems (ASes), each identified by an Autonomous System Number (ASN), advertise their presence to one another. These ASes make up the backbone of the internet; each one is a grouping of IP address ranges run by a single operator, such as a CDN. Facebook’s ASN, 32934, is a grouping of 453,989 domains and 381 IP addresses. In order for your computer to reach anything in this network, the networks between you and Facebook need to know the route to get there. This is where BGP comes into play. Facebook’s edge routers advertise the routes to its address ranges. Without those BGP routes, traffic from your computer has no known path to anything in the Facebook ASN.
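To make the "no route, no Facebook" idea concrete, here is a minimal sketch of a routing table that learns and forgets advertised routes. It is a toy model, not how a real BGP speaker works: the `RouteTable` class and its methods are made up for illustration, and `192.0.2.0/24` is a documentation-only prefix standing in for a real Facebook range. Only the ASN 32934 comes from the story above.

```python
import ipaddress


class RouteTable:
    """Toy view of the routes one router has learned from its peers."""

    def __init__(self):
        self.routes = {}  # ip_network -> origin AS number

    def advertise(self, prefix, origin_asn):
        """Record that origin_asn announced a route to prefix."""
        self.routes[ipaddress.ip_network(prefix)] = origin_asn

    def withdraw(self, prefix):
        """Forget a previously announced route."""
        self.routes.pop(ipaddress.ip_network(prefix), None)

    def lookup(self, address):
        """Return the origin ASN of the most specific matching route, or None."""
        addr = ipaddress.ip_address(address)
        matches = [net for net in self.routes if addr in net]
        if not matches:
            return None
        best = max(matches, key=lambda net: net.prefixlen)
        return self.routes[best]


table = RouteTable()
table.advertise("192.0.2.0/24", 32934)   # placeholder prefix, real ASN
print(table.lookup("192.0.2.10"))        # 32934: a route exists
table.withdraw("192.0.2.0/24")           # the misconfiguration, in miniature
print(table.lookup("192.0.2.10"))        # None: nowhere to send the traffic
```

Once the route is withdrawn, the servers can be perfectly healthy and still be unreachable, which is roughly what the outage looked like from the outside.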

Why does it matter?

Normally I wouldn’t discuss a simple misconfiguration, but this one was interesting. When services go down it’s usually a DNS issue, which is why we have the joke “it’s always DNS”, but this time it was BGP. BGP is a notoriously vulnerable protocol since it relies on a trust model. Anyone who controls a BGP-speaking router can announce routes for addresses they don’t own and claim to be a better (shorter or more specific) route to an ASN. This is referred to as a BGP hijack.

BGP hijacking can allow the attacker to take complete control over the traffic destined for the ASN and send it to a malicious location. In 2018 Russian cyber criminals used BGP hijacking to redirect traffic destined for a cryptocurrency website to their malicious domain and stole $152,000.
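One common way a hijack wins is by announcing a more specific prefix than the legitimate owner, because routers forward along the longest matching prefix. The sketch below illustrates only that one rule; real BGP route selection also weighs things like local preference and AS path length. The `best_origin` helper, the `203.0.113.0/24` prefix, and the ASNs 64500 and 64511 are all placeholders from the documentation ranges, not data from any real incident.

```python
import ipaddress


def best_origin(announcements, address):
    """Pick the origin ASN whose announced prefix most specifically covers address.

    announcements is a list of (prefix, origin_asn) pairs.
    """
    addr = ipaddress.ip_address(address)
    covering = [
        (ipaddress.ip_network(prefix), asn)
        for prefix, asn in announcements
        if addr in ipaddress.ip_network(prefix)
    ]
    if not covering:
        return None
    # Longest prefix (largest prefixlen) wins, regardless of who really owns it.
    return max(covering, key=lambda item: item[0].prefixlen)[1]


LEGIT_ASN = 64500      # hypothetical owner of the /24
HIJACKER_ASN = 64511   # hypothetical attacker

announcements = [("203.0.113.0/24", LEGIT_ASN)]
print(best_origin(announcements, "203.0.113.10"))    # 64500

# The attacker announces a more specific /25 inside the victim's /24.
announcements.append(("203.0.113.0/25", HIJACKER_ASN))
print(best_origin(announcements, "203.0.113.10"))    # 64511: traffic is diverted
print(best_origin(announcements, "203.0.113.200"))   # 64500: outside the /25
```

Nothing in the lookup checks whether the announcer is entitled to the addresses, which is the trust problem in a nutshell.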

The Facebook outage is yet another reminder of how fragile the internet can be.

Facebook brings down Twitter, IsItDown, and others

A continuation of the above story:

The internet is a delicate place that’s highly interconnected, and one part of the Facebook outage that wasn’t heavily discussed is the effect it had on the rest of the internet.

While the actual outage was limited exclusively to the Facebook CDN, it had much wider impacts that could be seen across the entire internet. Shortly after Facebook went down, sites like IsitDown, Down Detector, Down For Everyone or Just Me, and Is it Down Right Now also began experiencing issues. These issues were likely the result of a massive flood of people searching for updates on Facebook’s status.

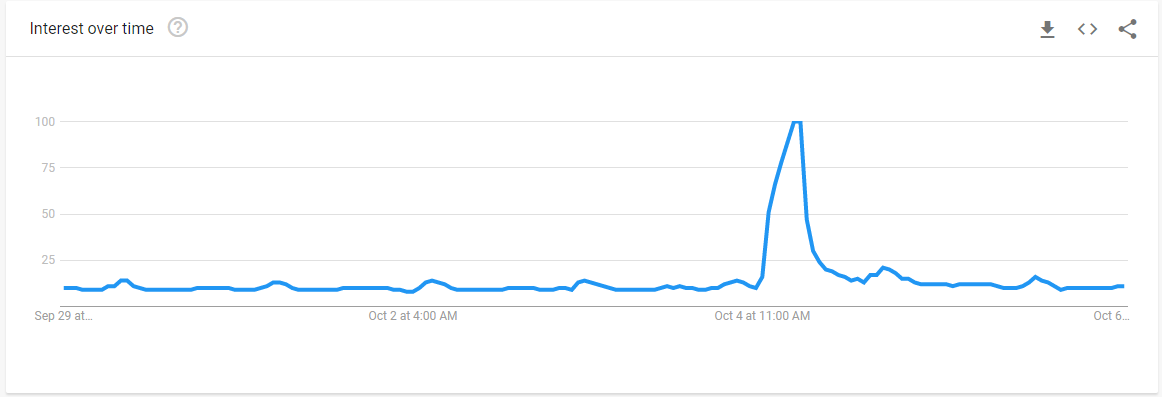

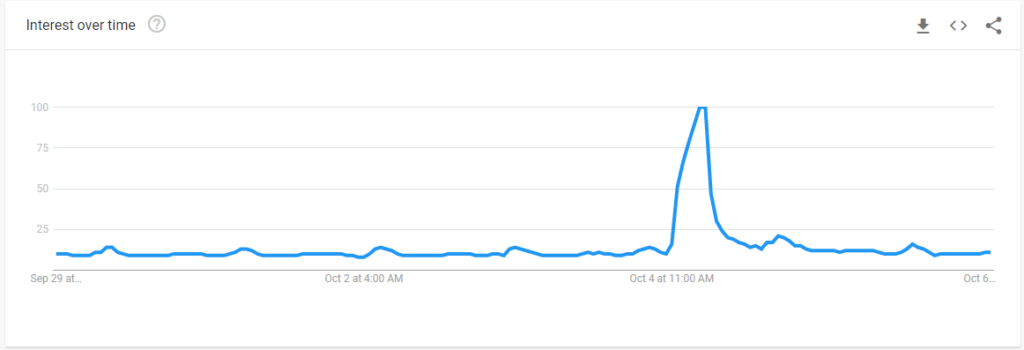

If you check Google Trends for people searching for Facebook, you can clearly see a massive increase in search interest right around the time of the outage.

These searches included multiple queries asking whether Facebook was down.

Twitter also experienced outages as users flocked to it to vent their frustration about the Facebook outage. Google and Cloudflare both experienced issues with their DNS services.

#GoogleDNS 8.8.8.8 becomes much slower because of #Facebookdown and all the client retries. pic.twitter.com/4aTyFAykMq

— awlnx (@awlnx) October 4, 2021

Now, here’s the fun part. @Cloudflare runs a free DNS resolver, 1.1.1.1, and lots of people use it. So Facebook etc. are down… guess what happens? People keep retrying. Software keeps retrying. We get hit by a massive flood of DNS traffic asking for https://t.co/qq6U47Tjc6

— John Graham-Cumming (@jgrahamc) October 4, 2021

The massive flood of DNS traffic to these services caused a significant slowdown in resolution speeds, with reply times going up from 1/10th of a second to 1/4th and in some instances 1/3rd of a second. While 1/3rd of a second may not sound like an issue, most services are used to getting replies near-instantaneously, so an added delay of roughly 150-230ms can cause services to fail.

Thats why my monitoring started crying a lot. It couldn’t resolve some DNS fast enough.

— Dennis Ziolkowski 💻 (@DeZio91) October 4, 2021

Why did this happen?

In short, there are a lot of things all over the internet that need Facebook to function. These include ads hosted by Facebook, websites that use Facebook for their comment sections, websites and apps that use Facebook for their logins, phone apps that send data back to Facebook, etc.

Even though Facebook was down, and most people knew it was down, that didn’t stop these services from continuing to try to connect. These services would hit the DNS servers looking for Facebook, and when they couldn’t reach it they would ramp up the rate at which they queried the DNS servers until they got an answer. In other words, an app that might look up Facebook’s IP once every minute was suddenly attempting to look up Facebook’s IP 20 times per second. When you expand this across the sheer number of apps and websites that have Facebook linkage, you can easily see how it could unintentionally DDoS a service.
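Going from one lookup per minute to 20 per second is a 1,200-fold increase per client. The usual way to avoid this kind of retry storm is exponential backoff with jitter: wait longer after each failure and randomize the wait so clients don't all retry in lockstep. The following is a minimal sketch of that idea, not the retry logic of any particular app or resolver; the function names and the 1-second base and 60-second cap are arbitrary choices for illustration.

```python
import random


def backoff_delay(attempt, base=1.0, cap=60.0, rng=random.random):
    """Seconds to wait before retry number `attempt` (0-based), with full jitter.

    The ceiling doubles with each attempt up to `cap`; the actual wait is a
    random fraction of that ceiling so clients spread out over time.
    """
    ceiling = min(cap, base * (2 ** attempt))
    return rng() * ceiling


def retries_within(window, base=1.0, cap=60.0):
    """Count retries that fit in `window` seconds when every wait is at its ceiling.

    Jitter is switched off here purely so the comparison is deterministic.
    """
    elapsed, attempt = 0.0, 0
    while True:
        delay = min(cap, base * (2 ** attempt))
        if elapsed + delay > window:
            return attempt
        elapsed += delay
        attempt += 1


aggressive = 20 * 60                 # 20 lookups per second for one minute
patient = retries_within(60)         # waits of 1, 2, 4, 8, 16 seconds fit
print(aggressive, patient)           # 1200 5
```

Multiply the difference by every app and website with a Facebook hook and the load on the resolvers looks very different.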

Twitch TV breached

On October 6th a user on the internet troll message board 4chan posted a torrent of 125GB of data related to Amazon-owned video game streaming platform Twitch TV. This breach allegedly includes the entirety of the Twitch TV source code, financial information of partnered streamers, private security tools used by the Twitch Security Operation Center (SOC), and information about Amazon’s attempt at a Steam competitor. Not included in this breach are usernames, emails, passwords, or credit card information.

As people go through the leaked data, more details of what’s included are coming out. One of the interesting things discovered in the security directory is a yml file containing SSH keys to the SOC’s Incident Response Team’s SIFT workstation in AWS. An attacker could use these SSH keys to connect to the SIRT’s SIFT workstation and potentially gain more information about systems being investigated.

The user who posted this stated that the leak was intended to “foster more disruption and competition in the online video streaming space,” and referred to this leak as “part 1”, implying there is possibly more to come soon. Underground hacking forums are speculating that part 2 might include user details, including passwords, and will possibly be sold instead of given away for free like part 1 was.

At this time, 0108 UTC 7 Oct 21, it’s still not clear exactly how this data was obtained. Members of the underground hacker forums have stated that the data seems structured like it was taken from an internal company wiki page rather than exfiltrated in a breach. This would imply an insider threat leaked the information rather than a hacker gaining access from the outside.

For its part, Twitch confirmed the breach happened but did not provide any specifics.

We can confirm a breach has taken place. Our teams are working with urgency to understand the extent of this. We will update the community as soon as additional information is available. Thank you for bearing with us.

— Twitch (@Twitch) October 6, 2021

UPDATE: A few hours after the Twitter post, Twitch released a blog post stating the breach was the result of a misconfiguration in a server which allowed a malicious third party to access content. Given that the bulk of Twitch is hosted on AWS, we can probably reasonably infer that the misconfiguration was an AWS S3 bucket being publicly exposed to the world. These types of breaches are unfortunately all too common, and “Security” company UpGuard has based a lot of their reputation on the discovery of these accidentally exposed S3 buckets, including detailing their discovery of a classified US Government S3 bucket.

Insider Threats Abound

As October is Cybersecurity Awareness Month, I felt it pertinent to remind everyone of the importance of monitoring and preparing for insider threats. This month we’ve had several incidents of insider threats. I’m not going to cover them in depth as they aren’t actually related to cyber, but I will list them.

- On 03 October 21 the ICIJ released information on the “Pandora Papers”. While yes, the ICIJ obtained these papers over a year ago, they only made the knowledge public on Sunday. The Pandora Papers are a massive database of 2.94TB of unstructured data related to “hidden owners of offshore companies, secret bank accounts, private jets, yachts, mansions, and artwork by Picasso.” The ICIJ understandably doesn’t disclose how they obtained this information, but leaks like this are usually from an insider grabbing as much as they can and leaking it all.

- Facebook began fighting back against the Wall Street Journal’s article on leaked internal company documents detailing the harmful effects of Instagram on teenage girls. These documents were provided to the Wall Street Journal by a Facebook employee disgruntled with company policies.

Google Moves to Implement Mandatory 2FA for all Accounts

Google has finally decided to implement mandatory two-factor authentication (2FA) for all accounts. This is a topic I’ve written about in the past, and I was perplexed that Google hadn’t already made it mandatory. Well, now Google intends to do just that for over 150 million accounts by the end of 2021.

MITRE TRAM Lives on

TRAM is a service created and maintained by the MITRE Corporation to help automate the mapping of cyber reporting to the techniques in the ATT&CK matrix. I’m a major fan of the ATT&CK matrix, but I hate the manual process of going through reporting and mapping what I read to the matrix. MITRE made TRAM back in 2019 but stopped updating the GitHub repository, until today.

Today, 6 Oct 21, the repository owner closed out 16 issues and archived the repository, linking instead to a new repository for the project. I immediately cloned the repository and tested it, and sure enough TRAM works again.

Apache Zero-Day

Apache had a major zero-day announced this week. CVE-2021-41773, which affects Apache HTTP Server 2.4.49, allows attackers to use a path traversal attack to map URLs to files outside the expected document root and potentially allows remote code execution. A path traversal attack works when a user modifies the URL to point to other files on the system. URLs point to files stored on the local system; each page is a file usually located inside the /var/www/ directory on the system (for Apache2).

A path traversal attack allows users to enter a URL like blog.gravitywall.net/../../ to move upwards to directories outside the /var/www/ directory. If a path traversal is possible, adding a ../ will move you up a directory, so /../../ will place an attacker in the / (root) directory on the system. From there an attacker could potentially put in a URL like blog.gravitywall.net/../../etc/passwd to display the passwd file of the system in the web browser.

This was demonstrated on Twitter by PT Swarm:

🔥 We have reproduced the fresh CVE-2021-41773 Path Traversal vulnerability in Apache 2.4.49.

If files outside of the document root are not protected by "require all denied" these requests can succeed.

Patch ASAP! https://t.co/6JrbayDbqG pic.twitter.com/AnsaJszPTE

— PT SWARM (@ptswarm) October 5, 2021
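For the defensive side of this, here is a minimal sketch of the kind of check a server needs to make before mapping a URL path to a file: decode it, normalize it, and confirm the result still sits under the document root. This is not Apache's code or its actual fix. The `resolve_request_path` function is hypothetical, `/var/www` is the document root assumed earlier in this post, and the percent-encoded example reflects public write-ups of this CVE describing encoded dots such as `.%2e` slipping past the 2.4.49 normalization.

```python
import posixpath
from urllib.parse import unquote

DOC_ROOT = "/var/www"  # assumed document root, as in the examples above


def resolve_request_path(url_path, doc_root=DOC_ROOT):
    """Map a URL path to a filesystem path, or return None if it escapes doc_root.

    Decoding happens BEFORE normalization, so "%2e%2e" is treated as "..".
    Symlinks are not resolved here; a real server has to consider those too.
    """
    decoded = unquote(url_path)
    candidate = posixpath.normpath(posixpath.join(doc_root, decoded.lstrip("/")))
    inside = candidate == doc_root or candidate.startswith(doc_root + "/")
    return candidate if inside else None


print(resolve_request_path("/index.html"))                             # /var/www/index.html
print(resolve_request_path("/../../etc/passwd"))                       # None
print(resolve_request_path("/cgi-bin/.%2e/%2e%2e/%2e%2e/etc/passwd"))  # None
```

The order matters: normalizing first and decoding afterwards would let the encoded dots through untouched, which is the general shape of the mistake behind this bug.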

Since a path traversal attack allows an attacker to reach various files on the system, it may also be possible to reach the /bin/bash binary and pass input to it through the request. Something similar to blog.gravitywall.net/../../bin/bash?echo=“something” might allow actual execution, as you’re calling bash and passing a variable to it. Apache’s advisory notes that remote code execution is possible when CGI scripts are also enabled for the affected paths.

The Apache Software Foundation has put out two patches to fix this vulnerability, and Cloudflare has stated that their WAF will protect Apache servers that can’t be patched immediately.